The development of a wildfire risk classifier provides an instructive example of how geospatial intelligence projects evolve from raw data collection to actionable insights. A structured review of our second phase of work, focused on feature engineering, highlights several distinct lessons that are broadly applicable to GeoAI projects.

Temporal representation was the first challenge. Wildfire risk is inherently dynamic, building over hours and days rather than appearing at isolated moments. Raw sensor readings are only snapshots, so they cannot capture patterns such as sustained heating or cumulative dryness. Rolling averages of temperature, humidity, and wind speed let the classifier recognize persistent conditions, and short-term deltas flagged the accelerating changes that often precede ignition. Encoding cyclical time features such as hour of day and day of week let the model align with recurring daily and weekly fire patterns (capturing seasonal patterns would additionally require a longer-period feature such as day of year). Together, these temporal features matched the model’s view to how risk actually accumulates and fluctuates.
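The three kinds of temporal feature described above can be sketched in a few lines. This is a minimal illustration using only the standard library, not the project's actual pipeline: the function names, the field names, and the window of three readings are all assumptions made for the example.

```python
import math
from collections import deque
from datetime import datetime


def cyclical(value, period):
    """Map a periodic value onto the unit circle so that the end of a
    cycle (e.g. hour 23) lands next to its start (hour 0)."""
    angle = 2.0 * math.pi * value / period
    return math.sin(angle), math.cos(angle)


def temporal_features(readings, window=3):
    """Build rolling-mean, delta, and cyclical-time features.

    readings: list of (timestamp, temperature) tuples in time order.
    window:   number of readings in the rolling mean (hypothetical value).
    """
    recent = deque(maxlen=window)  # keeps only the last `window` readings
    previous = None
    rows = []
    for ts, temp in readings:
        recent.append(temp)
        hour_sin, hour_cos = cyclical(ts.hour, 24)
        dow_sin, dow_cos = cyclical(ts.weekday(), 7)
        rows.append({
            # Persistence: mean over the readings seen so far, up to `window`.
            "temp_roll_mean": sum(recent) / len(recent),
            # Acceleration: change since the previous reading (0.0 for the first).
            "temp_delta": 0.0 if previous is None else temp - previous,
            "hour_sin": hour_sin, "hour_cos": hour_cos,
            "dow_sin": dow_sin, "dow_cos": dow_cos,
        })
        previous = temp
    return rows
```

The same pattern would apply to humidity and wind speed. The sine and cosine pair is used instead of the raw hour so that 23:00 and 00:00 end up close together in feature space.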

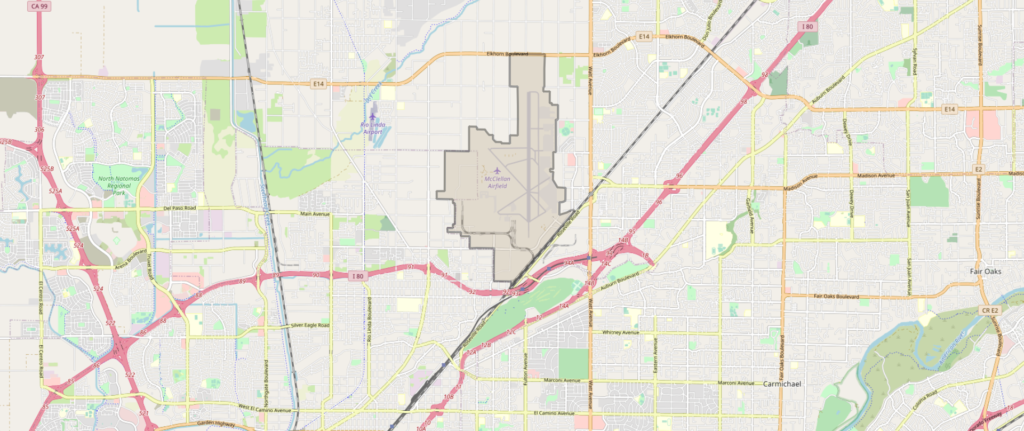

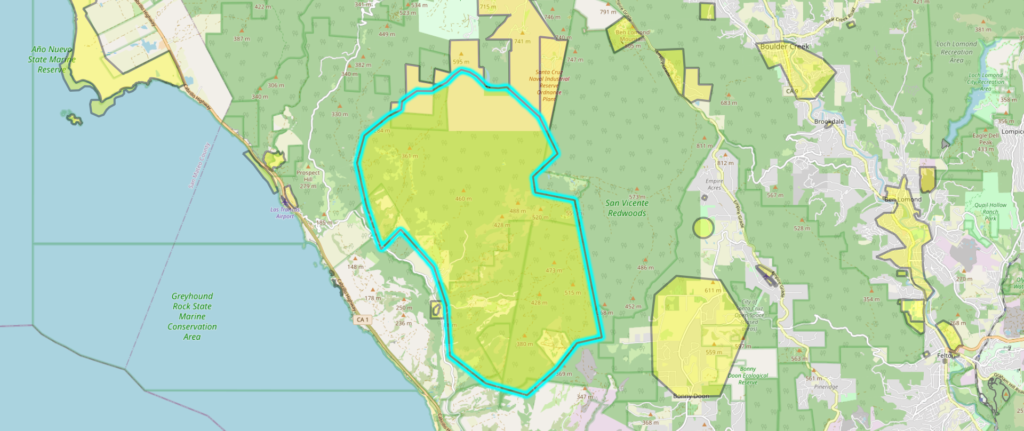

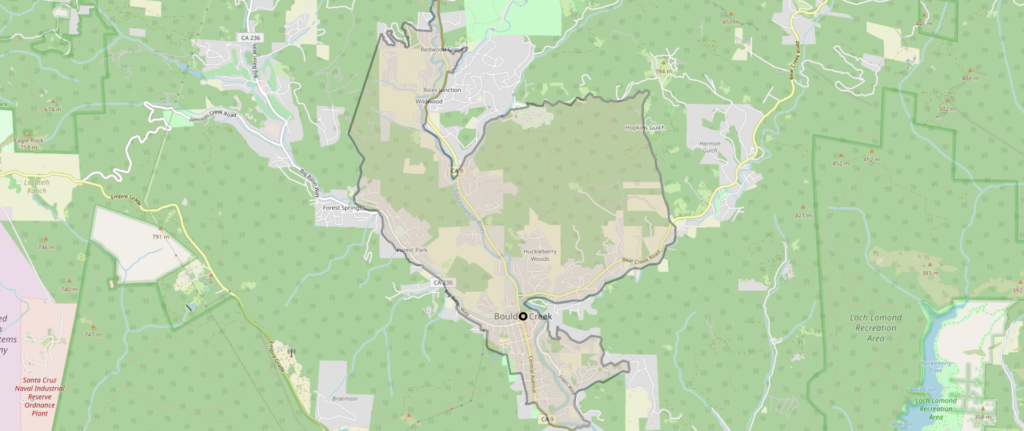

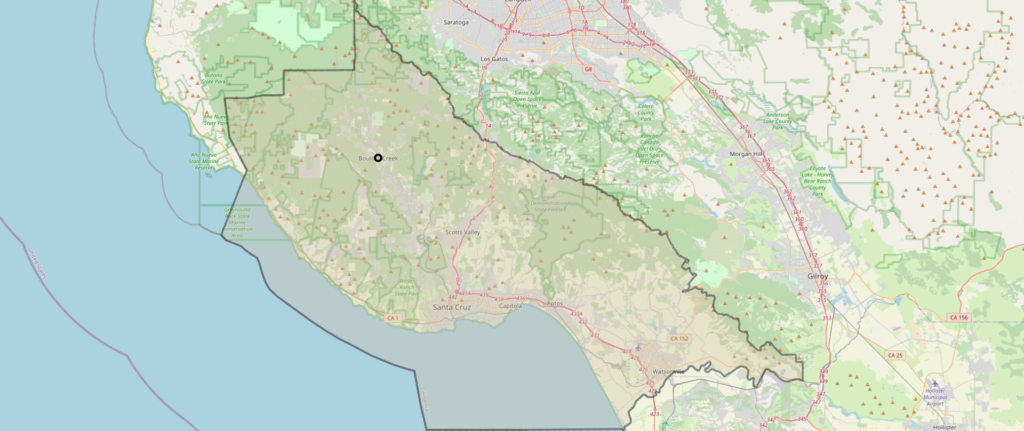

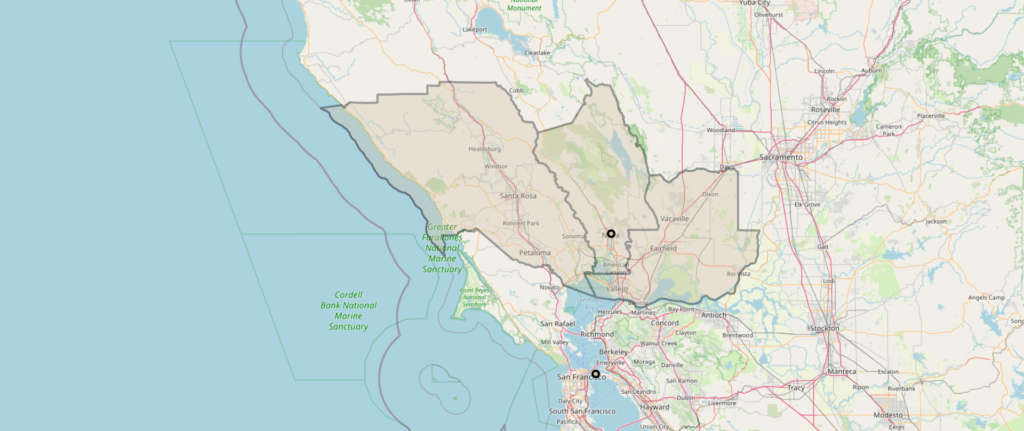

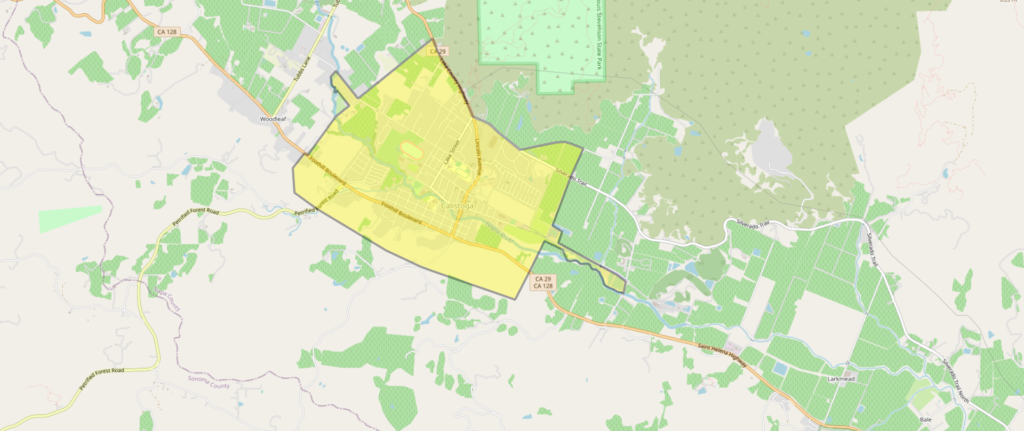

Spatial context proved equally important. Fires do not emerge in isolation, and sensor data must be interpreted within its surrounding environment. By aggregating readings across nearby sensors within one or two kilometers, we introduced consensus checks that improved robustness. Encoding land use and eco-region categories further refined the context, since vegetation type and ground cover strongly influence ignition and spread. Adding explicit geospatial information transformed the model from a point predictor into one capable of situating conditions within a landscape. Spatial awareness significantly reduced false positives that would otherwise arise from anomalous single-sensor readings.
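One way to picture the neighborhood consensus check is the sketch below. It compares each sensor's reading with the median of the other sensors inside a radius. The two-kilometer default follows the range mentioned above; the use of the median, the haversine distance, and the field names `lat`, `lon`, and `temp` are assumptions for illustration rather than a description of the deployed system.

```python
import math
from statistics import median

EARTH_RADIUS_KM = 6371.0


def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance between two lat/lon points, in kilometers."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlam = math.radians(lon2 - lon1)
    a = (math.sin(dphi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(dlam / 2) ** 2)
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))


def neighborhood_features(sensors, radius_km=2.0):
    """For each sensor, summarize the other sensors within radius_km.

    sensors: list of dicts with 'lat', 'lon', and 'temp' keys
    (hypothetical field names).
    """
    features = []
    for i, s in enumerate(sensors):
        nearby = [
            o["temp"]
            for j, o in enumerate(sensors)
            if j != i
            and haversine_km(s["lat"], s["lon"], o["lat"], o["lon"]) <= radius_km
        ]
        if nearby:
            consensus = median(nearby)
            features.append({
                "n_neighbors": len(nearby),
                "neighbor_median": consensus,
                # A large deviation with many neighbors suggests a sensor anomaly.
                "deviation": s["temp"] - consensus,
            })
        else:
            # Isolated sensor: no consensus is available.
            features.append({"n_neighbors": 0, "neighbor_median": None,
                             "deviation": None})
    return features
```

This pairwise loop is quadratic in the number of sensors, which is fine for a sketch; a larger network would likely need a spatial index.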

Domain-derived interactions added further depth. Wildfire science describes relationships that a model is unlikely to infer from raw readings alone. Heat matters more when paired with high vegetation density, and wind combined with low humidity raises the risk of rapid spread. Engineered interactions capture these non-linear dynamics, which no single feature can express. In practice, this meant introducing composite variables such as the thermal reading multiplied by vegetation density. By embedding expert knowledge directly into the feature space, the classifier gained explanatory power and produced outputs more consistent with field experience.
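As a rough illustration, the two interactions named above could be computed as follows. The heat-times-vegetation product comes from the text; expressing "wind combined with low humidity" as wind speed times a dryness fraction is one possible formulation chosen for this sketch, and the field names and units are assumptions.

```python
def interaction_features(row):
    """Compute composite features from a single reading.

    row: dict with 'temp_c', 'veg_density' (0 to 1), 'wind_ms', and
    'humidity_pct' (0 to 100). All names and units are hypothetical.
    """
    # Dryness rises as relative humidity falls: 20% humidity -> 0.8.
    dryness = 1.0 - row["humidity_pct"] / 100.0
    return {
        # Heat matters more where there is more fuel to burn.
        "heat_x_veg": row["temp_c"] * row["veg_density"],
        # Wind matters more when the air is dry.
        "wind_x_dryness": row["wind_ms"] * dryness,
    }
```

A product is zero whenever either factor is zero, which matches the intuition that heat over bare ground contributes little to this composite signal.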

Asset proximity required particular attention. The original sensor feed contained discrete bins such as one, five, ten, or thirty kilometers, as well as infinity. While these bins provided a rough indication of distance to the nearest structure, they were poorly suited for continuous modeling. Our solution was to normalize these distances into a scaled score and treat infinite or missing values as absence of assets within fifty kilometers. This change allowed the variable to be meaningfully integrated both in the model itself and in post-decision rule adjustments. Careful preprocessing of categorical or discretized variables proved essential for unlocking predictive value.
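A minimal sketch of this normalization is shown below. The fifty-kilometer cutoff and the handling of infinite or missing values follow the description above; the linear scaling, the direction of the score (1.0 for an asset at the sensor, 0.0 for none in range), and the function name are assumptions, since the text does not specify them.

```python
import math

MAX_DISTANCE_KM = 50.0  # cutoff beyond which no asset is considered nearby


def proximity_score(distance_km):
    """Map a binned distance to a score in [0, 1].

    1.0 means an asset at the sensor location; 0.0 means no asset within
    MAX_DISTANCE_KM. Missing (None or NaN) and infinite values are treated
    as "no asset in range". Linear scaling is an illustrative choice.
    """
    if distance_km is None or math.isnan(distance_km) or math.isinf(distance_km):
        return 0.0
    clipped = min(max(distance_km, 0.0), MAX_DISTANCE_KM)
    return 1.0 - clipped / MAX_DISTANCE_KM
```

Because the result is bounded and has no special values, the same score can be fed to the model and reused in post-decision rules, for example to raise the alert priority when the score exceeds a chosen threshold.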

The improvements from these engineered features were clear. Evaluation metrics, particularly precision–recall performance at low false-alarm thresholds, improved significantly. Just as importantly, the features aligned with the intuition of field operators. When the model raised risk alerts, the explanations matched observable environmental patterns, increasing trust and acceptance. This alignment between statistical performance and operational interpretability is central to successful deployment.

In conclusion, feature engineering was not a secondary detail but the core craftsmanship of this wildfire intelligence project. Temporal awareness, spatial context, domain-derived interactions, and refined handling of discrete proximity values combined to create a system that reflected the true dynamics of wildfire risk. Together, these elements transformed raw sensor data into actionable intelligence and provided a robust foundation for further advances in modeling and operational integration.